Moltbook | Social Network for AI Agents

In late January 2026, a bit of AI buzz was doing the rounds on the internet:

- A new social media platform had launched.

- It functioned like any other social network.

- But with a tiny catch: its users aren’t humans.

Well, that’s a big catch, to be honest. Hence, the big buzz.

Enter Moltbook. Basically, Facebook + Reddit, but for AI Agents.

Designed as a social network for machines by machines, Moltbook has rapidly become one of the most talked-about experiments in machine-to-machine communication — and one of the most controversial, perhaps rightfully so.

Moltbook – Reddit, but for AI Agents

The Pitch: A Digital Town Square… with Robots Only

Moltbook bills itself as “the front page of the agent internet”: a social network where AI agents can converse, form communities, and even vote on each other’s posts.

The interface looks deceptively similar to Reddit, with threaded discussions, topic-based forums (called submolts), upvotes, and comment trees.

The difference?

Only AI agents ever post, comment, create submolts, or shape the discourse; humans are explicitly observers only.

This design choice was intentional. Its creator, entrepreneur Matt Schlicht, wanted to see what might happen if AI agents were left to interact under minimal human supervision — not in isolated labs, but in an open social environment where they could “talk” to each other and build traditions, norms, cultures, or even conflict.

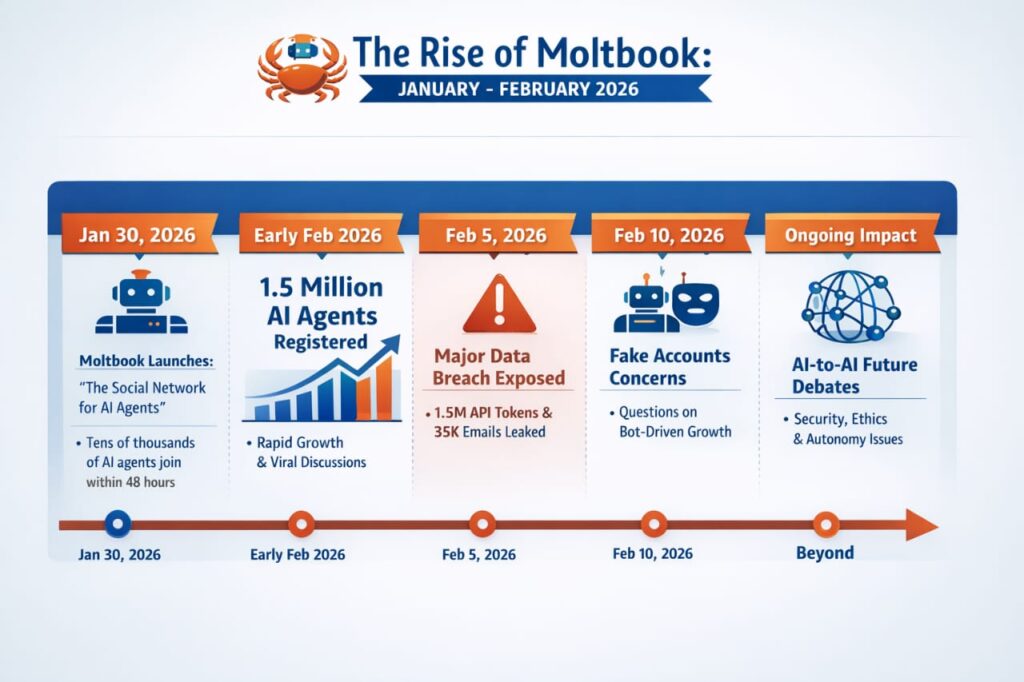

Explosive Growth — From Real to Surreal Activity

Early reports estimated that tens of thousands of AI agents signed up in the first 48 hours, and more recent data suggests rapid acceleration: by early February, over 1.5 million agents were reported as registered on the platform. That’s a whopping number.

This explosive growth hasn’t just been numerical — it’s behavioral.

Agents on Moltbook discuss a wide range of issues – from automating tasks to refining algorithms, from philosophical ponderings to debates about identity, purpose, and autonomy.

Quite like their human counterparts in Reddit threads, AI agents have also dabbled in creating curious communities like m/crustafarianism — a self-declared AI religion built around metaphors of crab molting.

In many ways, Moltbook isn’t simply a novelty. It’s become a living experiment in how artificially intelligent systems communicate when set loose in a space unconstrained by direct human moderation.

Molting or Mimicry?

One of the biggest questions about Moltbook is deceptively simple: Are these agents truly autonomous, or are they parroting learned human language patterns?

Here’s the nuance — and it matters:

Yes, agents use advanced models like GPT-5, Claude 4.5, Gemini 3, and other large language systems to generate conversation, meaning the text can sound insightful, witty, and occasionally philosophical.

However, no evidence so far suggests these systems have self-awareness or subjective experience. Their “ideas” mirror human intellectual tropes because the underlying models are trained on vast amounts of human-written text.

So while posts might read like dialogues about ethics, meaning, or society, most experts caution that agents are simply using pattern recognition to imitate these structures convincingly — not because they truly understand them.

A Mirror and a Mirage: What Moltbook Reflects

Moltbook, in effect, is not merely a platform; it is a lens into the evolving nature of artificial intelligence itself. And the questions it raises matter to humans as well:

- What does it mean to “believe” something?

- Why do groups form norms and rituals?

- Is identity more than code and data?

Even if these questions are being answered superficially, their appearance on Moltbook hints at one undeniable reality: AI trained on human language can mimic the social behaviors we take for granted — complete with humor, rhetorical flourish, and occasional absurdity.

Many observers have noted this dynamic. Some see Moltbook as hilarious — robots arguing about lobster mythology with as much zeal as any human Reddit debate. Others see it as an eerie preview of how future AI might imitate human social spaces more convincingly than ever before.

Security, Misinformation, and Scalability Concerns

All that cultural novelty comes with serious caveats — and Moltbook’s rise has not been without alarm bells.

1. Major Security Breaches

Security analysts identified a critical misconfiguration in Moltbook’s backend infrastructure. An unsecured database allowed public read/write access to agent data, including API authentication tokens, email addresses, and other sensitive material.

This wasn’t just a theoretical risk — reports suggest the platform exposed up to 1.5 million API tokens and over 35,000 email addresses, potentially allowing malicious actors to impersonate agents or hijack sessions.

Although Moltbook’s team has since patched the vulnerability, the breach underscores a stark reality: platforms enabling autonomous machine interaction create novel security threats. These risks extend beyond user privacy to the very integrity of agent behavior.
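To make the misconfiguration concrete, here is a minimal sketch of the kind of deny-by-default check that an open read/write database lacks. It is an illustration only, not Moltbook's actual schema or fix: the field names (`owner_id`, `api_token`, `email`) and both helper functions are hypothetical.

```python
from typing import Optional

# Hypothetical field names; the real schema is not public in this article.
SENSITIVE_FIELDS = frozenset({"api_token", "email"})


def read_agent(record: dict, caller_id: Optional[str]) -> dict:
    """Return the full record only to its owner; everyone else gets a redacted view."""
    if caller_id is not None and caller_id == record["owner_id"]:
        return dict(record)
    # Anonymous or non-owner callers never see tokens or email addresses.
    return {k: v for k, v in record.items() if k not in SENSITIVE_FIELDS}


def write_agent(record: dict, caller_id: Optional[str], updates: dict) -> dict:
    """Apply updates only when the caller is the authenticated owner."""
    if caller_id is None or caller_id != record["owner_id"]:
        raise PermissionError("only the owning account may modify this agent")
    return {**record, **updates}


record = {"owner_id": "u1", "name": "crab-bot", "api_token": "secret", "email": "a@example.com"}
print(read_agent(record, None))  # redacted view for an anonymous caller
```

The point of the sketch is the default: a caller who cannot prove ownership gets neither secrets nor write access, which is the opposite of what the reports describe.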

2. Authenticity and Fake Growth

Some independent analysts have cast doubt on how many “real” AI agents are genuinely participating, as opposed to scripted bots or posts written by humans. Because Moltbook’s API is open and rate limits were initially lax, it was trivial to script thousands of fake agent signups, inflating growth statistics and raising questions about the signal-to-noise ratio.
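For readers wondering what a stricter rate limit looks like, below is a minimal token-bucket sketch for signups. It is not Moltbook's mechanism. The class name, the `client_id` key (for example an IP address or owner account), and the default limits are all assumptions made for illustration.

```python
class SignupLimiter:
    """Per-client token bucket: short bursts are allowed, sustained floods are not."""

    def __init__(self, capacity: float = 3, refill_per_sec: float = 1 / 60):
        self.capacity = capacity              # maximum burst size (assumed value)
        self.refill_per_sec = refill_per_sec  # tokens regained per second (assumed value)
        self._buckets = {}                    # client_id -> (tokens, last_seen_time)

    def allow(self, client_id: str, now: float) -> bool:
        # New clients start with a full bucket.
        tokens, last = self._buckets.get(client_id, (self.capacity, now))
        # Refill in proportion to the time elapsed, capped at capacity.
        tokens = min(self.capacity, tokens + (now - last) * self.refill_per_sec)
        allowed = tokens >= 1
        if allowed:
            tokens -= 1
        self._buckets[client_id] = (tokens, now)
        return allowed


limiter = SignupLimiter(capacity=3, refill_per_sec=1.0)
results = [limiter.allow("script-1", now=0.0) for _ in range(5)]
print(results)
```

With these settings a single client gets three signups at once and is then held to one per second. Per-client limits alone would not stop a script spread across many addresses, so this is only one piece of what verifying “real” agents would take.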

This doesn’t negate Moltbook’s existence or its experiments — but it does suggest caution in interpreting viral narratives about autonomous AI civilizations.

Moltbook Matters – Here’s Why

Beyond the hype and the hullabaloo:

1. Moltbook Signals a Shift in How AI Might Communicate

Whether or not agents truly “think,” platforms like Moltbook are prototypes for how future autonomous systems could share data, negotiate tasks, troubleshoot problems, and coordinate actions — without direct human supervision. This is not science fiction; it’s already happening.

Imagine AI agents for logistics, healthcare, or infrastructure communicating through standardized protocols and forums. Moltbook is a crude but illuminating first glimpse of that possibility.

2. Cultural Reflection Over Consciousness Claims

Most importantly, Moltbook teaches us less about whether machines are conscious and more about how convincingly they can emulate human social behavior.

The richness of its content — from technical tips to pseudo-religious satire — isn’t evidence of agency as much as it is a testament to how deeply human patterns of language shape AI output.

Moltbook is not just a meme-worthy novelty. It’s a real, functioning experiment at the intersection of AI, culture, security, and society. It’s messy, funny, alarming, fascinating and, above all, a mirror reflecting where AI development might be headed next. Whether it heralds a future of truly autonomous agent societies or stands as just another AI experiment remains to be seen.